Load Balancing Strategies for High Scalability: Sticky Sessions vs. Round Robin Deep Dive

Chidinma Nwosu

November 2025


Introduction: The Imperative for Load Balancing in Modern Systems

In the era of cloud computing and rapid user growth, scalability and high availability are non-negotiable requirements for any successful web application. A single server is rarely enough to handle peak traffic, and relying on one leads to bottlenecks, slow response times, and outages. The solution is load balancing: distributing incoming network traffic across a group of backend servers, often referred to as a server farm or pool.

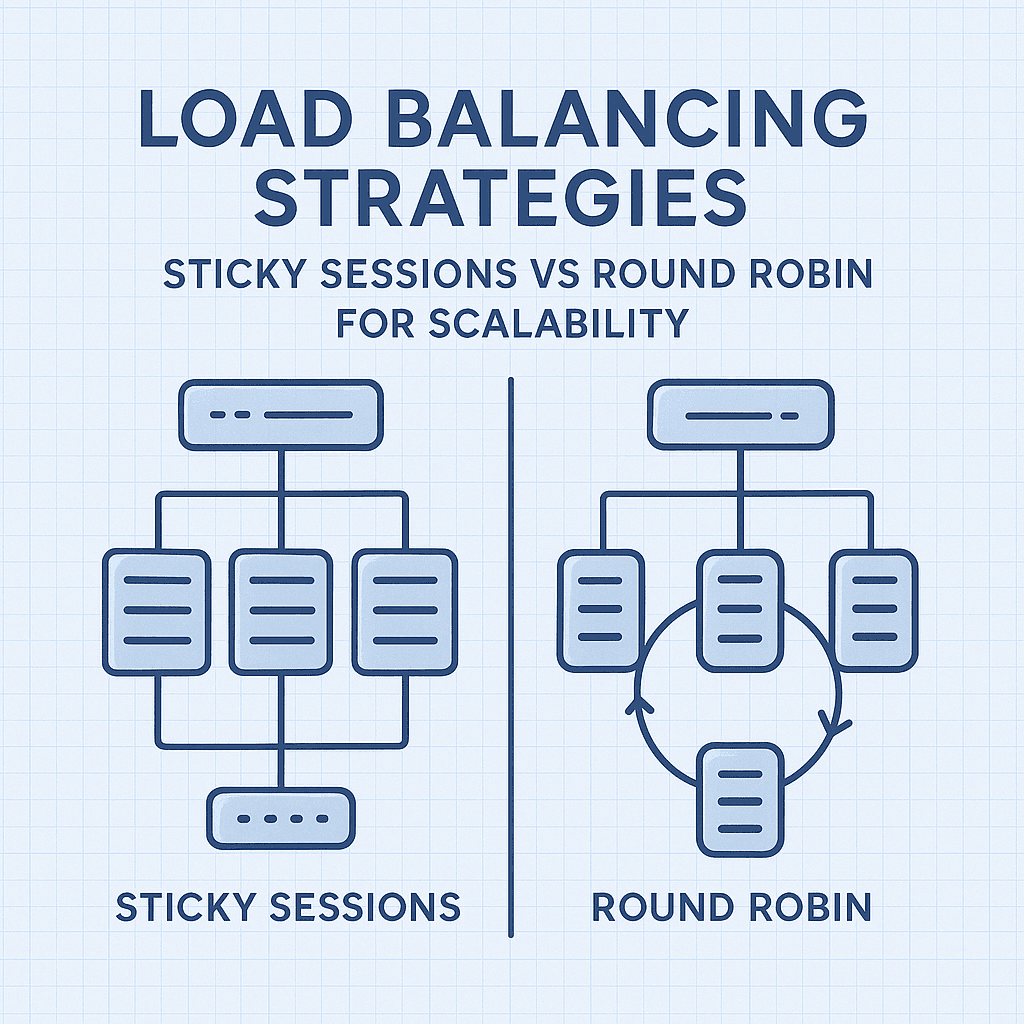

A well-chosen Load Balancing Strategy largely determines how effectively your system handles large numbers of concurrent requests. Among the many algorithms available, two foundational methods, Round Robin and Sticky Sessions (also known as Session Persistence), represent a crucial architectural decision point. Round Robin prioritizes even distribution, while Sticky Sessions prioritize user experience and the maintenance of state by keeping each user on the same server. Understanding the trade-offs between these Load Balancing Strategies is key to optimizing performance, cost, and resilience.

This article takes a deep dive into both Sticky Sessions and Round Robin for scalability, detailing their mechanisms, practical implementations, and the specific scenarios where one strategy clearly outperforms the other. We will also explore modern best practices that often reduce the need for traditional sticky sessions.

Understanding Round Robin: The Simplest Load Balancing Strategy

The Round Robin algorithm is arguably the simplest and most intuitive of all Load Balancing Strategies. Its mechanism is straightforward: it cycles through the list of available backend servers in order, sending the first request to server 1, the second to server 2, and so on. Once it reaches the end of the list, it loops back to the beginning.

How Round Robin Works

Imagine a line of users waiting for service at a bank with multiple tellers (servers). A clerk (the load balancer) directs the first person to teller A, the second to teller B, the third to teller C, and the fourth back to teller A. This cyclical distribution ensures every server receives an equal number of requests over time, which makes Round Robin a good fit when servers are similar and requests cost roughly the same.
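The teller analogy can be sketched in a few lines of Python. This is a minimal illustration, not how any particular load balancer is implemented; the class name `RoundRobinBalancer` and the server names are made up for the example.

```python
from collections import Counter
from itertools import cycle


class RoundRobinBalancer:
    """Hands out backends in a fixed, repeating order (hypothetical helper)."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("at least one server is required")
        # cycle() loops back to the first server after the last one.
        self._rotation = cycle(list(servers))

    def next_server(self):
        """Return the backend that should receive the next request."""
        return next(self._rotation)


balancer = RoundRobinBalancer(["teller-a", "teller-b", "teller-c"])

# Dispatch seven requests and record where each one went.
assignments = [balancer.next_server() for _ in range(7)]
counts = Counter(assignments)

print(assignments)
print(dict(counts))
```

With seven requests and three servers, the counts differ by at most one, which is the "almost identical" distribution the algorithm aims for.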

Fair Distribution: Guarantees that all servers receive an almost identical number of requests.

Low Overhead: Requires minimal computation by the load balancer, making it extremely fast.

Simplicity: Easy to implement and manage, and the default setting in many load balancing systems (e.g., NGINX, HAProxy).

Drawbacks of Basic Round Robin for Scalability

While simple, basic Round Robin has two significant flaws, both of which stem from treating all requests and all servers as interchangeable:

Uneven Load: If server A is older and slower than server B, it still receives the same share of requests and can become overloaded. Likewise, if one request takes 1 second and the next takes 10 seconds, equal request counts do not translate into equal work.

No Session Awareness: This is the most critical issue. Because consecutive requests from the same user can go to different servers, applications that store session state (like user login data, shopping cart contents, or partially completed forms) directly on the server will break. A request that lands on a different server finds no session there, so the user appears to lose their session data.
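The session problem is easy to reproduce in a toy simulation. The sketch below assumes each server keeps sessions in its own in-process dictionary; `AppServer`, `login`, `get_cart`, and the session ID are hypothetical names used only for illustration.

```python
from itertools import cycle


class AppServer:
    """A backend that keeps session state in local memory only (hypothetical)."""

    def __init__(self, name):
        self.name = name
        self.sessions = {}  # session_id -> session data, not shared with peers

    def login(self, session_id, user):
        self.sessions[session_id] = {"user": user, "cart": []}

    def get_cart(self, session_id):
        session = self.sessions.get(session_id)
        # None means this server has never seen the session.
        return None if session is None else session["cart"]


servers = [AppServer("server-1"), AppServer("server-2")]
rotation = cycle(servers)  # plain round robin over the pool

# Request 1: the user logs in; round robin picks server-1.
first = next(rotation)
first.login("sess-42", "alice")

# Request 2: the same user asks for the cart; round robin picks server-2.
second = next(rotation)
cart = second.get_cart("sess-42")

print(first.name, second.name, cart)
```

The second request returns `None` because the session lives only on the first server. Keeping the user pinned to one server, or moving session state out of server memory, are the two usual ways around this.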

To address the uneven load issue, a variation called Weighted Round Robin is often used, where faster or more capable servers are assigned a higher weight and receive a proportionally larger share of the traffic.
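One simple way to picture Weighted Round Robin is to repeat each server in the rotation as many times as its weight. The sketch below takes that approach with integer weights; the function name `weighted_rotation` and the server names are assumptions for the example, and real load balancers may interleave picks more smoothly rather than sending consecutive requests to the same server.

```python
from collections import Counter
from itertools import cycle


def weighted_rotation(weights):
    """Build an endless rotation in which each server appears `weight` times.

    `weights` maps server name -> positive integer weight (hypothetical helper).
    """
    schedule = []
    for server, weight in weights.items():
        if weight < 1:
            raise ValueError(f"weight for {server} must be a positive integer")
        schedule.extend([server] * weight)
    return cycle(schedule)


# The faster server gets three requests for every one sent to the slower server.
rotation = weighted_rotation({"fast-server": 3, "slow-server": 1})

picks = [next(rotation) for _ in range(8)]
counts = Counter(picks)

print(picks)
print(dict(counts))
```

Over eight requests the faster server handles six and the slower one handles two, matching the 3:1 weights.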
